Shortly after the release of ChatGPT, interest in generative AI surged, and a plethora of generative AI tools entered the market. However, as people began to embrace and use these tools, a concerning trend emerged.

Many artists and creators realized that the results generated by AI art tools appeared to be influenced by their own work. This observation led to a disconcerting conclusion: these AI art generators are trained on data available on the internet, including the artwork of these artists, often without their explicit permission.

In response to this issue, researchers at the University of Chicago have unveiled a powerful tool known as “Nightshade.” Nightshade is designed to give artists a means to protect their creative content from being used without their permission by generative AI systems. The tool was reported by MIT Technology Review, which got an exclusive preview of the research.

What is Nightshade?

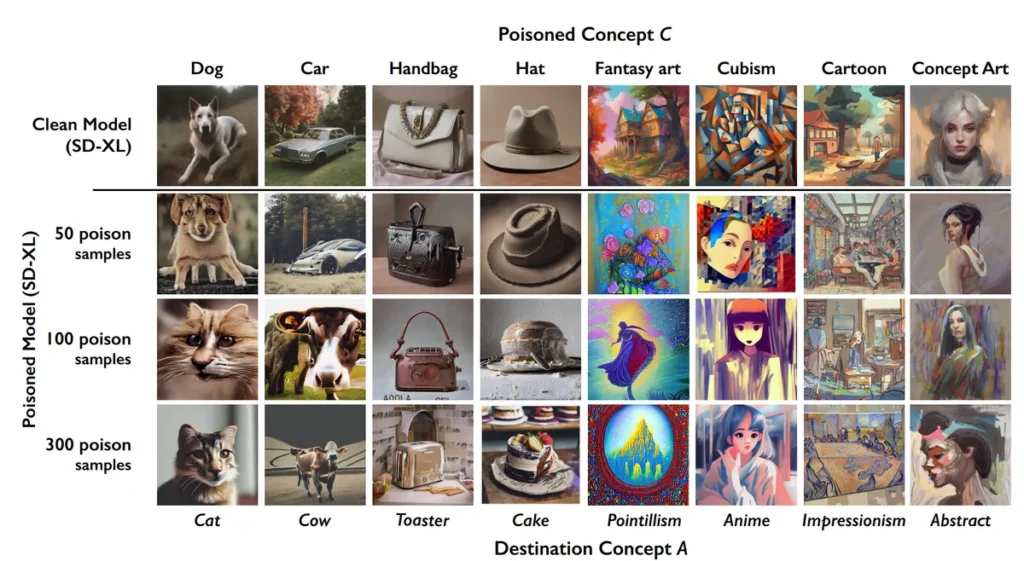

Nightshade is an AI tool developed by Ben Zhao, a professor at the University of Chicago, and his team. It gives artists the option to punish AI developers who use their art without permission or a license. It works by altering the artwork at the pixel level in ways that are invisible to the naked eye. For AI art generators such as DALL-E, Midjourney, and Stable Diffusion, however, the altered images act as a poison and can corrupt the AI model itself. The tool was introduced to fight back against AI companies that use artists’ artwork to train their models without the creators’ permission.

Nightshade AI’s Effects

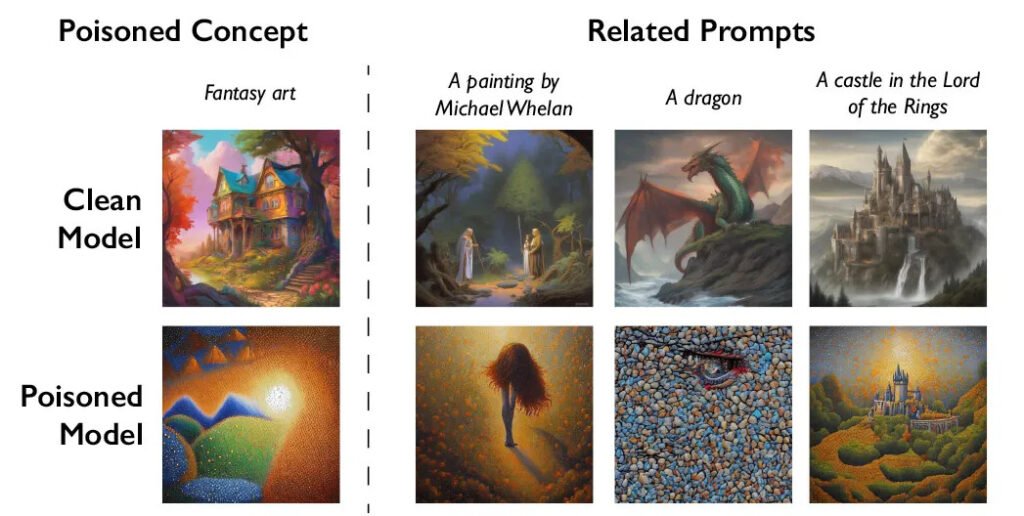

When artwork altered with this tool ends up in training data, the outputs of future iterations of AI art generators can become useless. The image above shows how Nightshade changed the AI art generator’s understanding of concepts: a dog became a cat, a car became a cow, and more.

Nightshade’s mission

The team hopes that Nightshade will help tip the power balance back from AI companies toward artists by creating a powerful deterrent against disrespecting artists’ copyright and intellectual property.

Zhao’s team also developed Glaze, a tool that allows artists to “mask” their own personal style to prevent it from being scraped by AI companies. It works in a similar way to Nightshade: by changing the pixels of images in subtle ways that are invisible to the human eye but manipulate machine-learning models into interpreting the image as something different.
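To make the idea of a subtle pixel change concrete, here is a minimal Python sketch. It is not the Nightshade or Glaze algorithm: the function names, the tiny 3×3 grayscale image, and the `epsilon` limit are all assumptions made for illustration. The real tools choose their changes deliberately to mislead a model, while the random noise used here is only a stand-in and would not poison anything. The sketch shows just one property, that every pixel can be changed while no pixel moves by more than a small, fixed amount.

```python
import random


def perturb_pixels(pixels, epsilon=2, seed=0):
    """Return a copy of a grayscale image with each pixel shifted by at most epsilon.

    Hypothetical helper for illustration only. Random noise stands in for the
    carefully chosen changes a real tool would compute.
    """
    rng = random.Random(seed)
    out = []
    for row in pixels:
        new_row = []
        for value in row:
            delta = rng.randint(-epsilon, epsilon)
            # Clamp to the valid 0..255 range for 8-bit pixel values.
            new_row.append(min(255, max(0, value + delta)))
        out.append(new_row)
    return out


def max_abs_difference(a, b):
    """Largest per-pixel difference between two images of the same size."""
    return max(abs(x - y) for row_a, row_b in zip(a, b) for x, y in zip(row_a, row_b))


# A made-up 3x3 grayscale image (values 0..255).
image = [[120, 121, 119], [200, 0, 255], [64, 65, 66]]
poisoned = perturb_pixels(image, epsilon=2)

# No pixel moved by more than epsilon, so the change stays visually negligible.
print(max_abs_difference(image, poisoned) <= 2)  # prints True
```

A shift of two brightness levels out of 256 is far below what most viewers would notice, which is the sense in which such edits are described as invisible to the human eye.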

The team plans to integrate Nightshade into Glaze, so that artists can choose whether or not to use the data-poisoning tool. Nightshade will also be open source, which would allow others to make their own versions. The more people use it and make their own versions of it, the more powerful the tool becomes, Zhao says.